Welcome to Only Influencers

A Thriving Community of Email Industry Professionals dedicated to knowledge and networking, and Co-Producer of Email Innovations World

| Join Only Influencers | Free Email Newsletter |

| Upcoming Webinar | On-Demand Webinars |


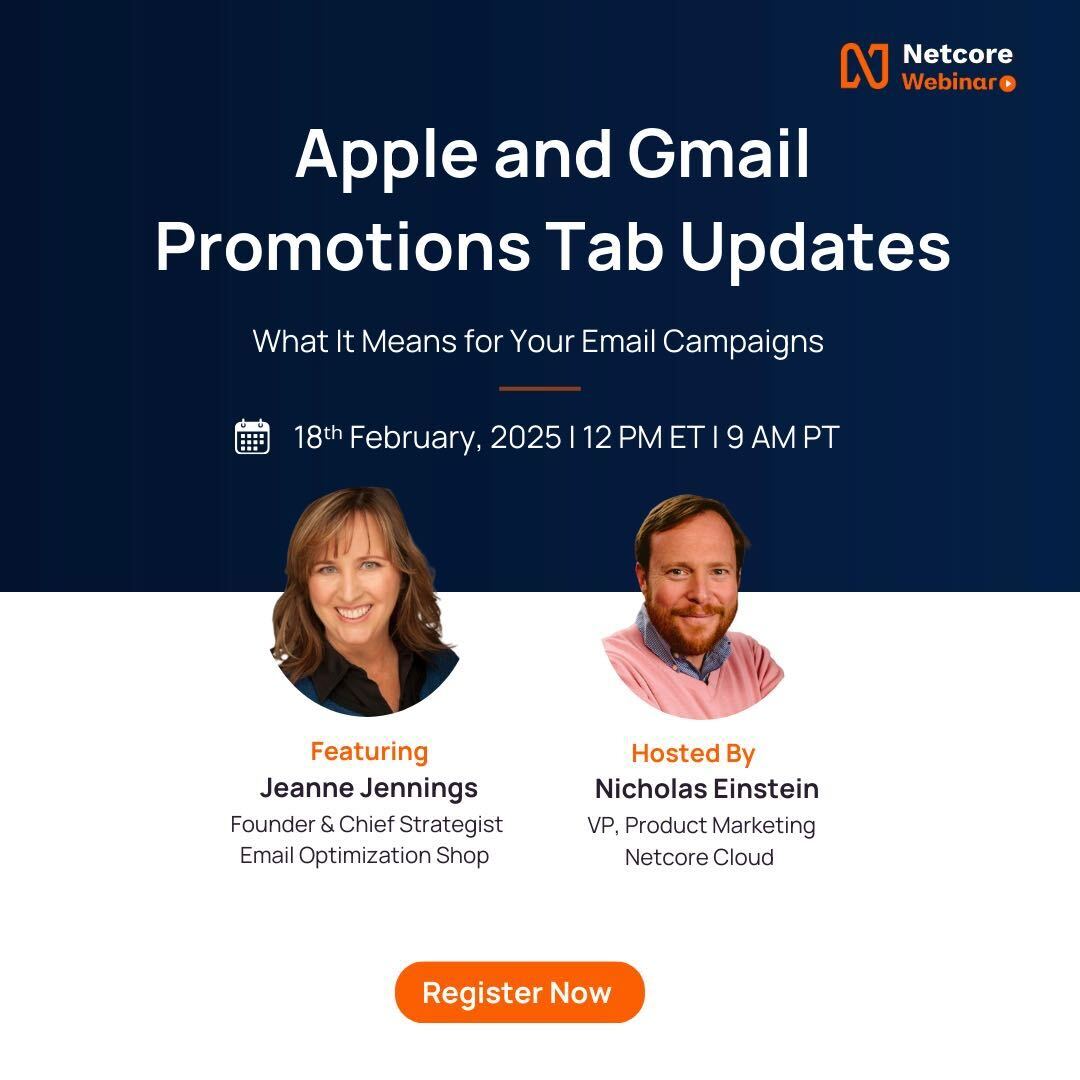

Apple and Gmail Promotions Tab Updates

Checklist for Email Marketing Success in 2025:

Upcoming OI-Members-Only Live Zoom Discussions

| Date | Presenter | Organization |
| --- | --- | --- |
| September 4, 2025 | OI Member Chris Marriott | Email Connect |
| September 11, 2025 | OI Member Elizabeth Jacobi | Mochabear Marketing |
| September 18, 2025 | OI Member Ryan Phelan | RPE Origin |
| September 25, 2025 | TBD | |
| October 2, 2025 | OI Member Guy Nagar | Kuverto |
| October 9, 2025 | OI Member Chad S. White | Oracle Digital Experience Agency |

The OI Email Metrics Project

